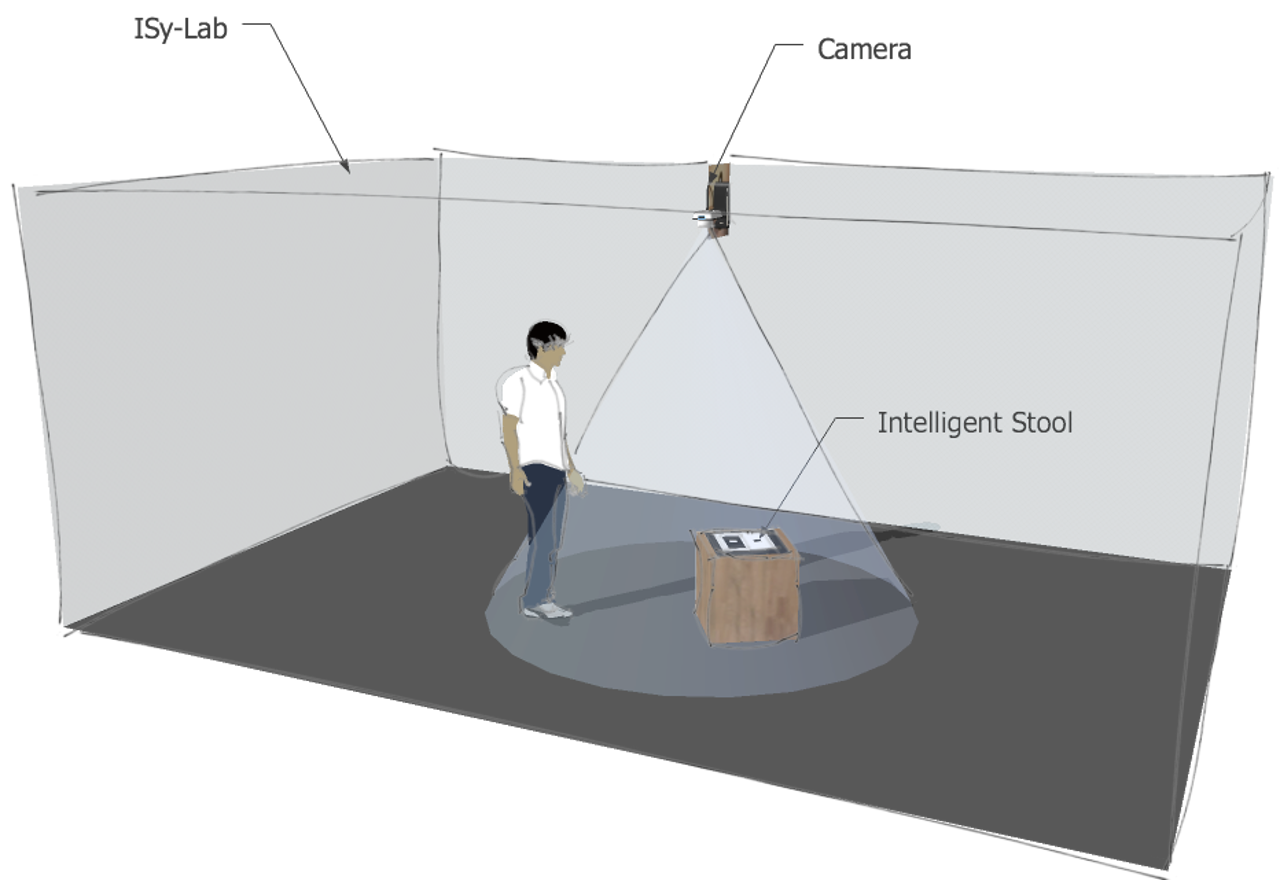

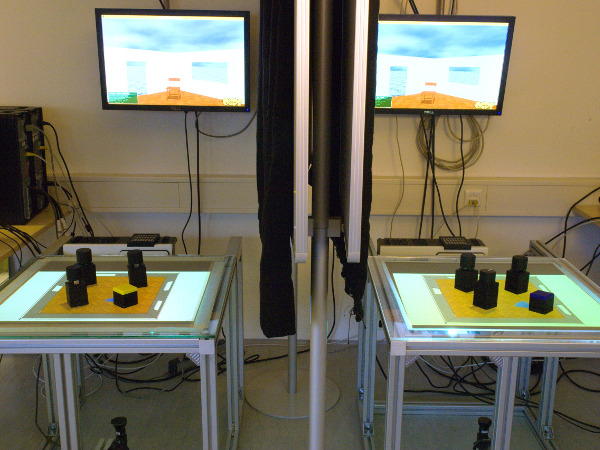

The goal was to build a self-driving seat that detects people in a room and offers itself for seating. A camera mounted below the ceiling is used for visual search and marker tracking. A table representing the room serves as a control surface: tangible objects placed on it are monitored by a webcam, and each object corresponds to an actual mobile seat in the room. The seat is controlled over a wireless connection, using coordinates calculated from the webcam images.
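The step from a tangible object's position in the webcam image to a target position in the room can be illustrated with a minimal sketch. It assumes that the webcam image covers exactly the table surface, that the table is an axis-aligned, proportionally scaled model of a rectangular room, and that lens distortion can be ignored. The class name `TableToRoomMap`, its fields, and the example dimensions are hypothetical and are not taken from the project.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableToRoomMap:
    """Linear map from webcam pixels to room coordinates (assumed setup)."""

    image_width_px: float   # width of the webcam image covering the table
    image_height_px: float  # height of the webcam image covering the table
    room_width_m: float     # room extent along the image's horizontal axis
    room_depth_m: float     # room extent along the image's vertical axis

    def to_room(self, u_px: float, v_px: float) -> tuple[float, float]:
        """Scale a pixel position (u, v) to a room position (x, y) in metres."""
        x = u_px / self.image_width_px * self.room_width_m
        y = v_px / self.image_height_px * self.room_depth_m
        return (x, y)


# Hypothetical example: a 640x480 image mapped onto an 8 m x 6 m room.
mapping = TableToRoomMap(640, 480, 8.0, 6.0)
target = mapping.to_room(320, 240)  # object in the image centre
```

With these example values, an object in the centre of the image maps to the centre of the room. A real setup would more likely need a calibrated homography to account for the camera's viewing angle.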

The seat was built with two motors and a robust body. Remote control of the seat works. Because of problems tracking the seat with the marker-tracking software, however, it can currently only be driven by remote control. The Pioneer platform runs smoothly. Control via tangible objects works well: placing an object on the control table makes the seat navigate to the corresponding position in real room coordinates. The table for tangible objects was built and uses a webcam to track the movement of the objects, which correspond to the mobile platforms. Software components such as the potentialplanner are implemented and functioning.
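The internals of the potentialplanner are not described here. As an illustration of the general idea behind potential-field planning, the following sketch uses a common textbook formulation: the goal attracts the platform, nearby obstacles repel it, and the platform takes a fixed-length step along the resulting force. The function name `potential_step` and all parameters and default values are assumptions made for this example, not the project's actual implementation.

```python
import math


def potential_step(position, goal, obstacles,
                   k_att=1.0, k_rep=0.5, influence=1.0, step=0.1):
    """Return the next 2D position after one potential-field step.

    position, goal: (x, y) tuples in room coordinates.
    obstacles: iterable of (x, y) obstacle positions.
    All gains and distances are illustrative assumptions.
    """
    px, py = position
    gx, gy = goal

    # Attractive force: pulls linearly towards the goal.
    fx = k_att * (gx - px)
    fy = k_att * (gy - py)

    # Repulsive force: pushes away from obstacles within the influence radius.
    for ox, oy in obstacles:
        dx, dy = px - ox, py - oy
        d = math.hypot(dx, dy)
        if 0.0 < d < influence:
            magnitude = k_rep * (1.0 / d - 1.0 / influence) / d ** 2
            fx += magnitude * dx / d
            fy += magnitude * dy / d

    norm = math.hypot(fx, fy)
    if norm == 0.0:
        return position  # no net force: at the goal or in a local minimum

    # Move a fixed distance along the direction of the net force.
    return (px + step * fx / norm, py + step * fy / norm)


# Hypothetical usage: one step towards a goal with a single obstacle nearby.
next_position = potential_step((0.0, 0.0), (1.0, 0.0), [(0.2, 0.1)])
```

Calling the function repeatedly with its own output traces a path towards the goal. Because the step length is fixed, this simple version can overshoot close to the goal and can get stuck in local minima, which are known limitations of plain potential-field methods.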

Student Participants

- Andreas Kipp

- Dimitri Petker

- Matthias Schröder

- Dominik Weissgerber

Supervisor

- Eckard Riedenklau

Project Details

Date: Feb 28, 2010

Author: Eckard Riedenklau

Website: http://www.techfak.uni-bielefeld.de/ags/ami/research/taos/ims.shtml